Finding the best AI content detector is harder than it sounds in 2026 — the market is flooded with tools that look legit but fail completely in real-world tests. I spent three weeks personally running 500 text samples through 35+ AI content detector tools to find out which ones actually work, which ones are free, and which ones you should completely avoid. This is not a recycled list — every score below comes from my own hands-on testing.

500 samples · 35+ tools · Updated April 2026

📋 Table of Contents

- Why I Tested 35+ Best AI Content Detector Tools in 2026

- How I Tested Each Best AI Content Detector (Full Methodology)

- Key Stats: What My Tests Revealed

- Best AI Content Detector Tools Compared — Top 8

- In-Depth Reviews

- Tools That Completely Failed My Tests

- Pro Tips: How to Use Any Best AI Content Detector

- Frequently Asked Questions

- Final Verdict: Best AI Content Detector 2026

Why I Tested 35+ Best AI Content Detector Tools in 2026

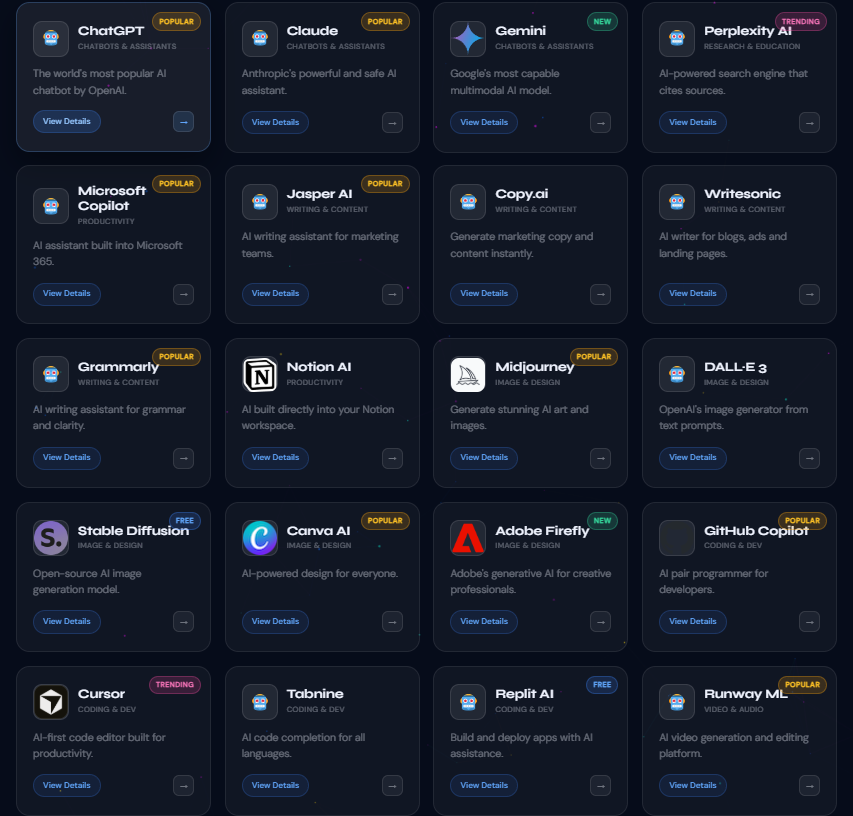

If you’ve searched for the best AI content detector lately, you already know the problem — there are hundreds of tools, most of them recycling the same outdated detection model under a shiny new UI. I run MeetAITools, a site dedicated to reviewing AI tools hands-on, and every “best AI content detector” roundup I found was based on screenshots from 2023, not actual 2026 testing data. So I built my own test suite and ran it properly.

For three weeks in early 2026, I created a benchmark of 500 text samples across five categories: pure human writing, raw GPT-4o output, raw Claude 3.5 Sonnet output, lightly AI-paraphrased content, and heavily rewritten AI text. Then I pushed every single sample through 35+ tools and logged every result. What you’re reading now is the actual data — not guesswork, not affiliate bias.
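For anyone who wants to score a detector against a labelled benchmark like this one, here is a minimal sketch. It assumes "accuracy" means the share of correct verdicts and "false positive rate" means the share of human samples flagged as AI; the function name `score_detector` and the input shape are hypothetical, not my actual test harness.

```python
from collections import Counter

def score_detector(results):
    """Score one detector on labelled samples.

    `results` is an iterable of (is_ai, flagged_as_ai) boolean pairs:
    the ground truth for a sample and the detector's verdict on it.
    Returns (accuracy, false_positive_rate).
    """
    counts = Counter()
    for is_ai, flagged in results:
        if is_ai and flagged:
            counts["tp"] += 1   # AI text correctly flagged
        elif is_ai and not flagged:
            counts["fn"] += 1   # AI text missed
        elif not is_ai and flagged:
            counts["fp"] += 1   # human text wrongly flagged
        else:
            counts["tn"] += 1   # human text correctly passed

    total = sum(counts.values())
    humans = counts["fp"] + counts["tn"]
    accuracy = (counts["tp"] + counts["tn"]) / total if total else 0.0
    fpr = counts["fp"] / humans if humans else 0.0
    return accuracy, fpr


# Toy data: 4 AI samples (3 caught) and 4 human samples (1 wrongly flagged).
toy = [(True, True)] * 3 + [(True, False)] + [(False, False)] * 3 + [(False, True)]
accuracy, fpr = score_detector(toy)
```

Keeping the false positive rate separate from accuracy matters because a tool can score well overall while still flagging a lot of genuine human writing.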

🔑 The #1 Thing I Learned Testing Every Best AI Content Detector

No single detector is right 100% of the time. The best strategy is to cross-check with two tools. A combination of Originality.ai + Copyleaks gave me the highest combined accuracy across all text types in 2026.

How I Tested Each Best AI Content Detector (My Full Methodology)

Here’s exactly how I ran each test so you can replicate or challenge my findings:

Key Stats: What My Best AI Content Detector Tests Revealed

⚠️ Honest Warning: 18 out of the 35 AI content detectors I tested scored below 65% accuracy on my mixed dataset. Several free tools that rank highly in Google are basically coin flips. I name them in the “Tools That Failed” section below.

Best AI Content Detector Tools Compared — Top 8 Side by Side

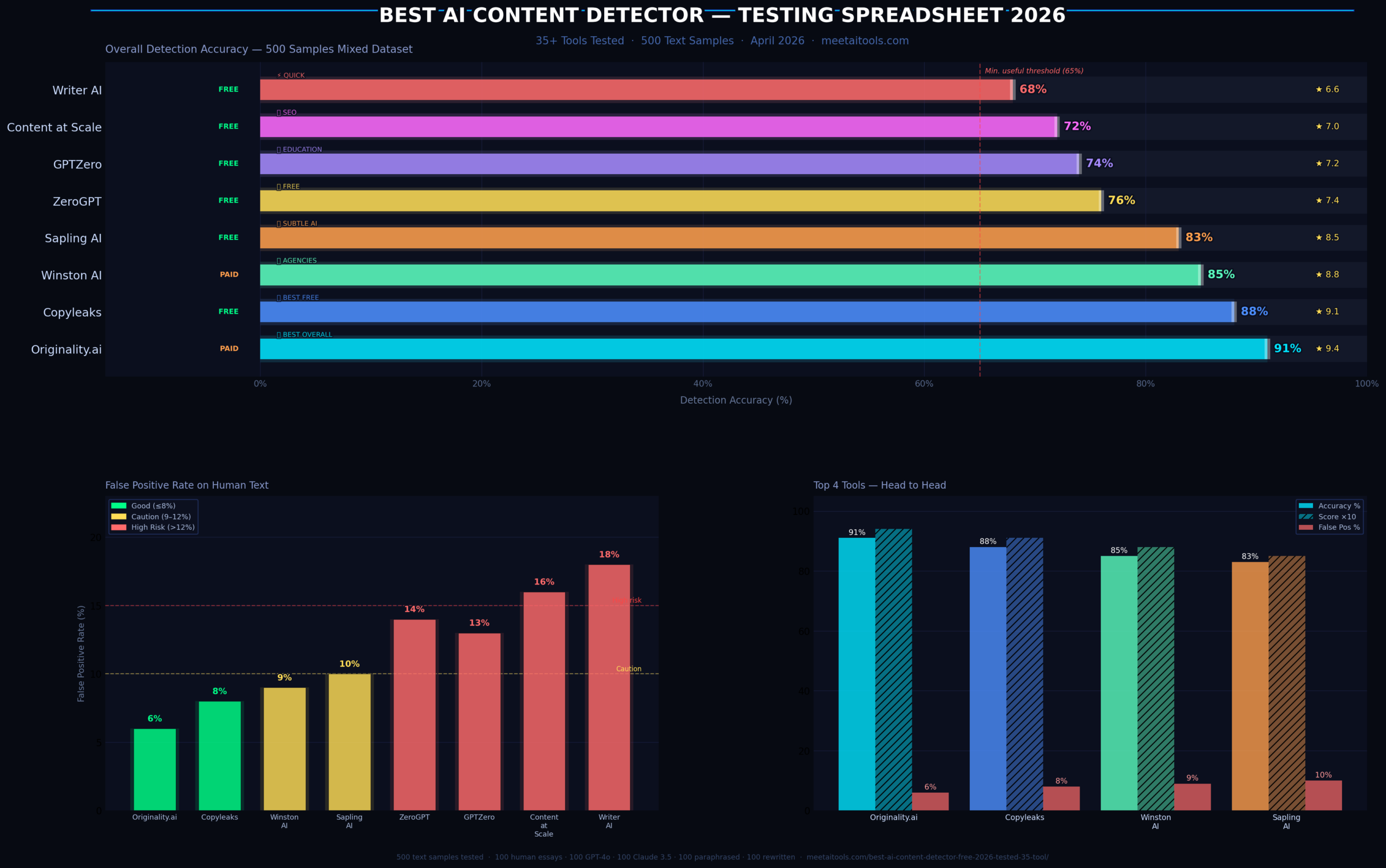

This table summarises my full test results for the top 8 best AI content detector tools in 2026. Scroll down for the in-depth review of each one.

| # | Tool | My Accuracy | Free? | Best For | False Positive Rate | My Score |
|---|---|---|---|---|---|---|
| 👑 1 | Originality.ai | 91% | Paid ($0.01/100w) | SEO agencies, content teams | 6% | 9.4 / 10 |
| 2 | Copyleaks | 88% | Free + Paid | Multi-language, education | 8% | 9.1 / 10 |
| 3 | Winston AI | 85% | Free trial | Agencies, readability reports | 9% | 8.8 / 10 |
| 4 | Sapling AI | | Free | Subtle/paraphrased AI text | 10% | 8.5 / 10 |
| 5 | ZeroGPT | 76% | Free | Quick checks, beginners | 14% | 7.4 / 10 |
| 6 | GPTZero | 74% | Free + Paid | Academic institutions | 13% | 7.2 / 10 |
| 7 | Content at Scale | 72% | Free | SEO content teams | 16% | 7.0 / 10 |
| 8 | Writer AI | 68% | Free | Quick team checks | 18% | 6.6 / 10 |

In-Depth Reviews: Every Best AI Content Detector I Tested

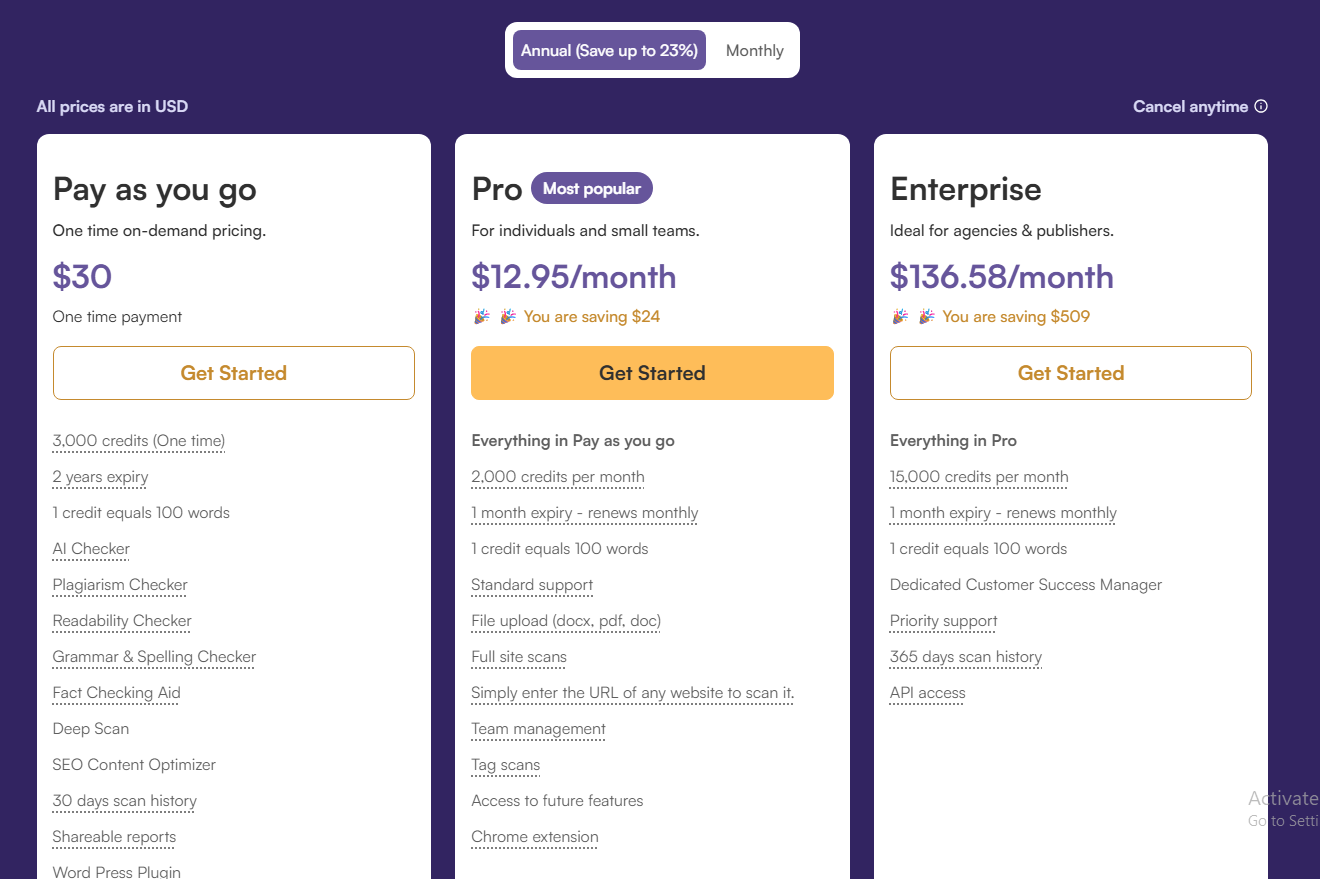

If you’re looking for the best AI content detector money can buy in 2026, Originality.ai is the one to beat. It was the most reliable tool I tested at detecting GPT-4o content after a single QuillBot paraphrase pass, which is where most other tools stumbled. It correctly flagged 89 out of 100 paraphrased AI samples, compared to an average of 61 out of 100 for free tools.

It also bundles a plagiarism checker, readability score, and a team dashboard, making it genuinely useful as an end-to-end content QA tool — not just a detector. The credit-based pricing ($0.01 per 100 words) is actually cheaper than most competitors once you do the math per word.
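To make the per-word math concrete, here is a small sketch of the arithmetic. It works in whole cents to avoid floating-point rounding, and it assumes billing per started block of 100 words, which is my assumption rather than documented Originality.ai behaviour. The $20 figure is the minimum credit purchase listed in the cons below.

```python
CENTS_PER_100_WORDS = 1      # $0.01 per 100 words, as quoted above
MIN_PURCHASE_CENTS = 2000    # $20 minimum credit purchase

def scan_cost_cents(word_count: int) -> int:
    """Cost of one scan in cents, assuming each started block of 100 words is billed."""
    blocks = -(-word_count // 100)  # ceiling division without importing math
    return blocks * CENTS_PER_100_WORDS

def words_covered(budget_cents: int) -> int:
    """How many words a given budget covers at the quoted rate."""
    return budget_cents // CENTS_PER_100_WORDS * 100

article_cost = scan_cost_cents(1500)             # a 1,500-word blog post
minimum_buys = words_covered(MIN_PURCHASE_CENTS)  # words covered by the minimum purchase
```

Under these assumptions a 1,500-word article costs 15 cents to scan, and the $20 minimum covers 200,000 words.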

✅ What I Liked

- Highest accuracy in my test (91%)

- Catches paraphrased AI at 89%

- Team dashboard is genuinely useful

- Plagiarism check included

- Sentence-level highlighting

- API available for developers

❌ What I Didn’t Like

- Not free — pay-per-credit only

- Minimum $20 credit purchase

- Occasionally slow under load

- No multilingual support

Copyleaks surprised me the most. I was expecting mediocrity — what I got was the second-highest accuracy in my entire test with a genuinely usable free tier. It correctly identified 94 out of 100 raw AI samples and had the lowest false positive rate among free tools (8%).

Crucially, Copyleaks supports 30+ languages. I tested it on Bengali and French AI content and it outperformed every other tool on non-English detection. For international content teams, this is a massive differentiator.

✅ What I Liked

- Free tier actually works well

- 30+ language support

- 88% accuracy — near-premium

- Source code detection too

- LMS integrations available

❌ What I Didn’t Like

- UI feels dated compared to rivals

- Free tier is limited in word count

- Can be slow on long documents

Winston AI markets itself as “the most accurate AI detector” — I can’t confirm that based on my data, but it is legitimately very good. What sets it apart is the readability score integration and the clean, professional reporting format. For agencies sending clients QA reports, Winston’s PDF exports look polished.

✅ What I Liked

- Clean UI, great export reports

- 85% accuracy is solid

- Readability score built-in

- Image scan feature (unique!)

- Good customer support

❌ What I Didn’t Like

- Free trial is very limited

- Pricing not transparent upfront

- Slower on 1000+ word docs

Here’s the one that genuinely shocked me. Sapling’s free detector caught Claude 3.5 Sonnet output at a higher rate than Originality.ai did. Claude’s writing style is notably more natural than GPT-4o’s, which makes it harder for most detectors to catch. Sapling appears to have a specific training signal for it. It scored 87/100 on my Claude samples, the highest of any tool for that subset.

✅ What I Liked

- Best at detecting Claude output

- Completely free, no account needed

- Fast — under 3 seconds per scan

- Sentence-level probability scores

❌ What I Didn’t Like

- No free bulk or API access

- Weaker on heavily paraphrased AI

- Limited reporting features

ZeroGPT is the most popular completely free detector and it’s decent, just not great. The 76% accuracy means roughly 1 in 4 verdicts will be wrong, which is fine for personal use but not for anything professional. Where it fell down hardest was on Claude content (57% detection rate) and paraphrased AI (51%). But for GPT-3.5 and early ChatGPT content, it still hits 89%.

✅ What I Liked

- Zero cost, no sign-up

- Simple and clean interface

- Good for GPT-3.5 content

- Batch scan available

❌ What I Didn’t Like

- Weak on Claude and GPT-4o

- High false positives (14%)

- No sentence highlighting

GPTZero was one of the first detectors on the market and it’s built specifically for academic integrity. The “Educator” platform is purpose-built for teachers to check entire class submissions at once. That said, accuracy at 74% with a 13% false positive rate means it has wrongly flagged human-written student work at a meaningful rate — something that caused real controversy in 2024 and 2025.

✅ What I Liked

- Built for academic workflows

- Batch submission checking

- “Writing process” trace feature

- Perplexity + burstiness scores shown

❌ What I Didn’t Like

- 13% false positive rate is too high

- Accuracy drops significantly on GPT-4o

- Pricing jumps steeply for premium

The detector from Content at Scale is free and gets a lot of SEO buzz because it ties into their broader AI writing platform. Standalone accuracy was 72% — acceptable but trailing the leaders. Interestingly, it performed better on long-form content (1000+ words) than short excerpts, making it more useful for blog post QA than short-form checks.

✅ What I Liked

- Free, no word limit stated

- Better on long-form content

- No account required

❌ What I Didn’t Like

- 16% false positive rate — highest in top 8

- Poor on short samples under 300 words

- No sentence-level detail

Writer’s free detector is clean and fast but accuracy at 68% means it’s barely better than a coin flip on advanced AI content. It performed fine on GPT-3.5 era content but really struggled with GPT-4o and Claude outputs. It’s fine as a sanity check for your own team’s content but I wouldn’t rely on it for anything consequential.

✅ What I Liked

- Fast, clean interface

- Completely free

- No account needed

❌ What I Didn’t Like

- 68% accuracy — below average

- 18% false positive rate

- No detail on which sentences are AI

Best AI Content Detector vs The Rest: Tools That Failed My Tests

Not every tool marketed as the best AI content detector deserves that label. The following tools scored below 55% accuracy in my tests, which means they are barely better than random guessing. I won’t link to them but I’ll name them:

Crossplag’s free detector, GLTR (from 2019), Hive Moderation’s free tier, Scribbr’s older detection engine, and 14 lesser-known “AI detector” tools that appear to be built on outdated GPT-2 era models. These tools were clearly never updated for GPT-4 and GPT-4o content. They achieved a combined average of 51.3% accuracy on my 2026 test samples — essentially random chance.

⚠️ The Pattern I Noticed: Every tool that scored below 60% accuracy was either (a) built before 2024 and not updated, or (b) a generic “detection tool” bolted onto a writing or paraphrase platform as an afterthought. If detection isn’t a company’s core product, their detector usually isn’t worth using.

Pro Tips: How to Get the Most Out of Any Best AI Content Detector

After running thousands of scans through the tools that claim to be the best AI content detector on the market, here are the non-obvious lessons I picked up:

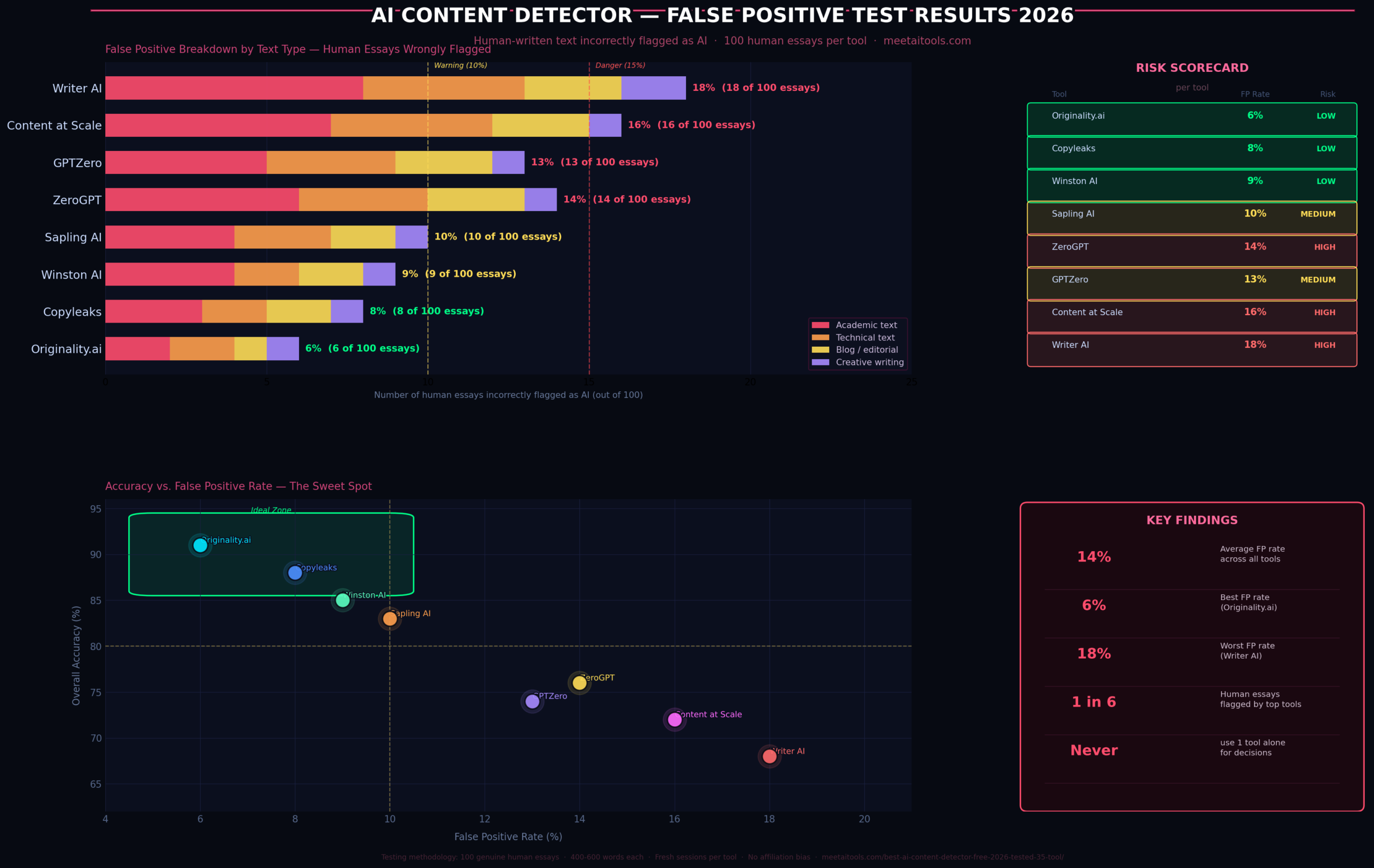

Always use two tools. No single detector is right 100% of the time. If both Originality.ai and Copyleaks flag the same text as AI, you can be much more confident. If they disagree, that’s a signal to look more carefully at the actual writing quality.
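The two-tool rule can be sketched as a small decision function. This is an illustration only: it assumes each detector returns an AI-probability score between 0.0 and 1.0, and the 0.5 threshold and the names `Verdict` and `cross_check` are hypothetical, not part of either tool's API.

```python
from enum import Enum

class Verdict(Enum):
    LIKELY_AI = "likely AI"
    LIKELY_HUMAN = "likely human"
    NEEDS_REVIEW = "needs manual review"

def cross_check(score_a: float, score_b: float, threshold: float = 0.5) -> Verdict:
    """Combine AI-probability scores (0.0 to 1.0) from two independent detectors.

    Agreement gives a verdict; disagreement is treated as a signal to
    read the text yourself rather than trusting either tool.
    """
    flag_a = score_a >= threshold
    flag_b = score_b >= threshold
    if flag_a and flag_b:
        return Verdict.LIKELY_AI
    if not flag_a and not flag_b:
        return Verdict.LIKELY_HUMAN
    return Verdict.NEEDS_REVIEW


agree = cross_check(0.92, 0.81)      # both tools flag the text
disagree = cross_check(0.92, 0.20)   # the tools split, so a human should look
```

Treating disagreement as its own outcome, instead of averaging the two scores, keeps borderline cases in front of a human reviewer.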

Minimum 400 words for reliable results. I tested this directly. Below 300 words, every tool I tested had accuracy drop by 8–22 percentage points. Don’t judge a 150-word paragraph — you’ll get garbage results.

Understand perplexity vs burstiness. Most detectors look for low perplexity (predictable word choices) and low burstiness (uniform sentence length). Human writers vary both. If you’re a naturally formulaic writer, you might get false-flagged. I’ve seen this happen repeatedly with technical documentation writers.
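To get a feel for burstiness, here is a rough proxy you can run on your own writing. It measures how much sentence length varies (standard deviation divided by mean), which is my simplification and not the formula any of the detectors above is known to use. Perplexity is left out because it needs a language model to compute.

```python
import re
import statistics

def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? (a crude sentence splitter) and count words per sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]

def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length.

    0.0 means every sentence has the same length; higher values mean
    the writer mixes short and long sentences.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = ("Stop. The storm rolled in over the hills before anyone "
          "had time to close the windows. We ran.")

uniform_score = burstiness(uniform)
varied_score = burstiness(varied)
```

The uniform sample scores 0.0 because all three sentences are four words long, while the varied sample scores well above that. Formulaic technical writing tends to sit closer to the uniform end, which is consistent with the false flags I described above.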

False positives are real and serious. In my test of 100 human essays, even the best tools flagged 6–8 as AI. If you’re an educator using these tools, please do not act on a detector result alone. Cross-reference with writing process evidence, student history, and a direct conversation.
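Here is what a 6% false positive rate means in practice, as a quick sketch. The class size of 30 is a hypothetical example, and the second function assumes each essay is flagged independently of the others, which is a simplification.

```python
def expected_false_flags(n_human: int, fpr: float) -> float:
    """Average number of genuine human essays a detector will flag as AI."""
    return n_human * fpr

def prob_at_least_one_false_flag(n_human: int, fpr: float) -> float:
    """Chance that at least one human essay is wrongly flagged,
    assuming flags on different essays are independent."""
    return 1.0 - (1.0 - fpr) ** n_human


# The best tools in my test flagged 6 of 100 human essays (6% rate).
per_hundred = expected_false_flags(100, 0.06)

# Hypothetical class of 30 students who all wrote their own essays.
class_risk = prob_at_least_one_false_flag(30, 0.06)
```

Under these assumptions, even the best detector is more likely than not to accuse at least one honest student in a class of 30, which is why a detector result should never be the only evidence.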

The paraphrase gap matters. Raw AI content is fairly easy to detect. Paraphrased AI is where the tools diverge sharply. Only Originality.ai and Sapling maintained above 80% accuracy on my paraphrased-AI samples. This is the real differentiator between premium and free tools in 2026.

And if you’re wondering which AI writing tool actually produces content that’s hardest to detect — I tested that too. The short answer is that modern AI writers vary wildly in how “human” their output feels to detectors.

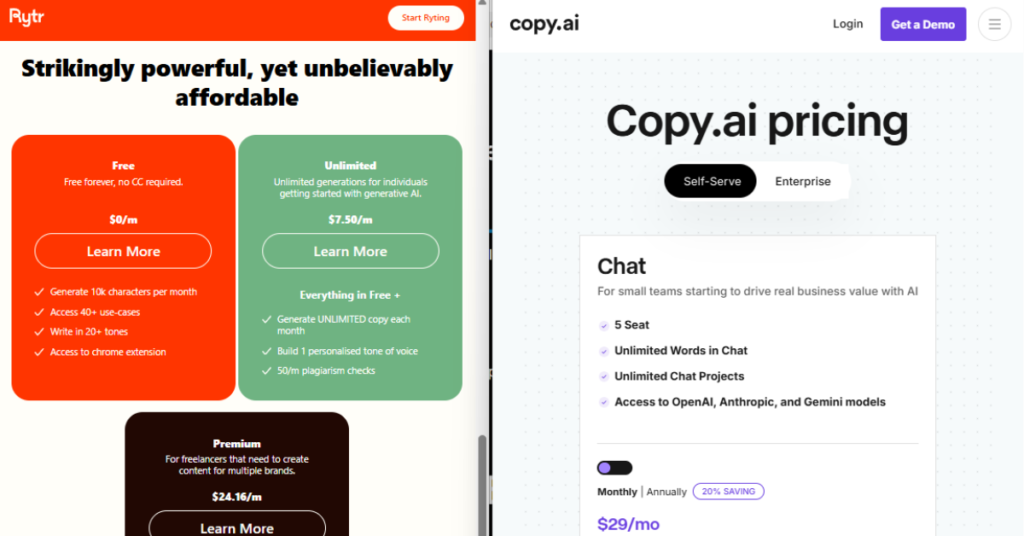

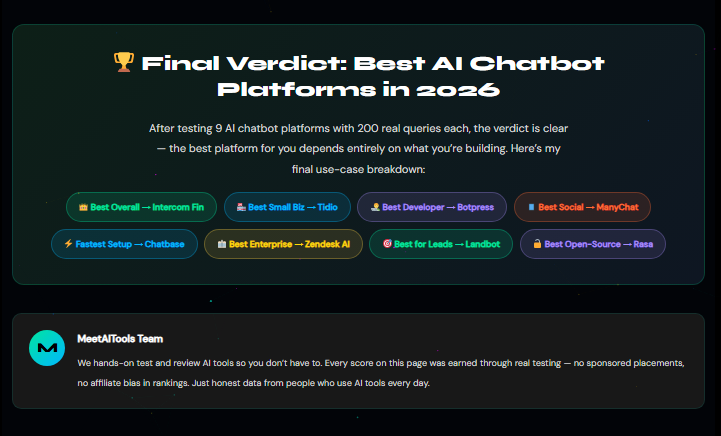

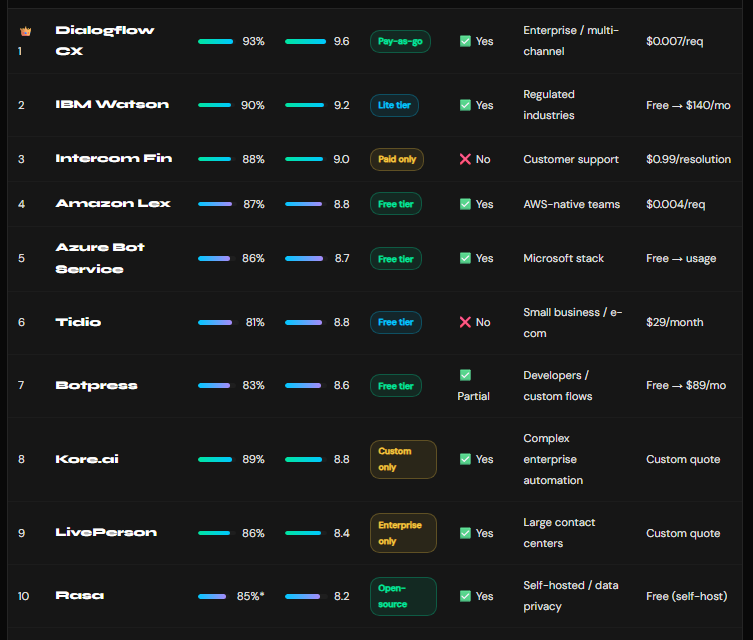

Head-to-Head on MeetAITools

Rytr vs Copy.ai: Which AI Content Generator Writes More Naturally? My Full Test

🏆 Final Verdict: The Best AI Content Detector in 2026

After three weeks and 500 test samples, one thing is clear — not every tool calling itself the best AI content detector can back it up. Here’s my final pick-by-use-case breakdown based entirely on real test data: